
MemantoClaw

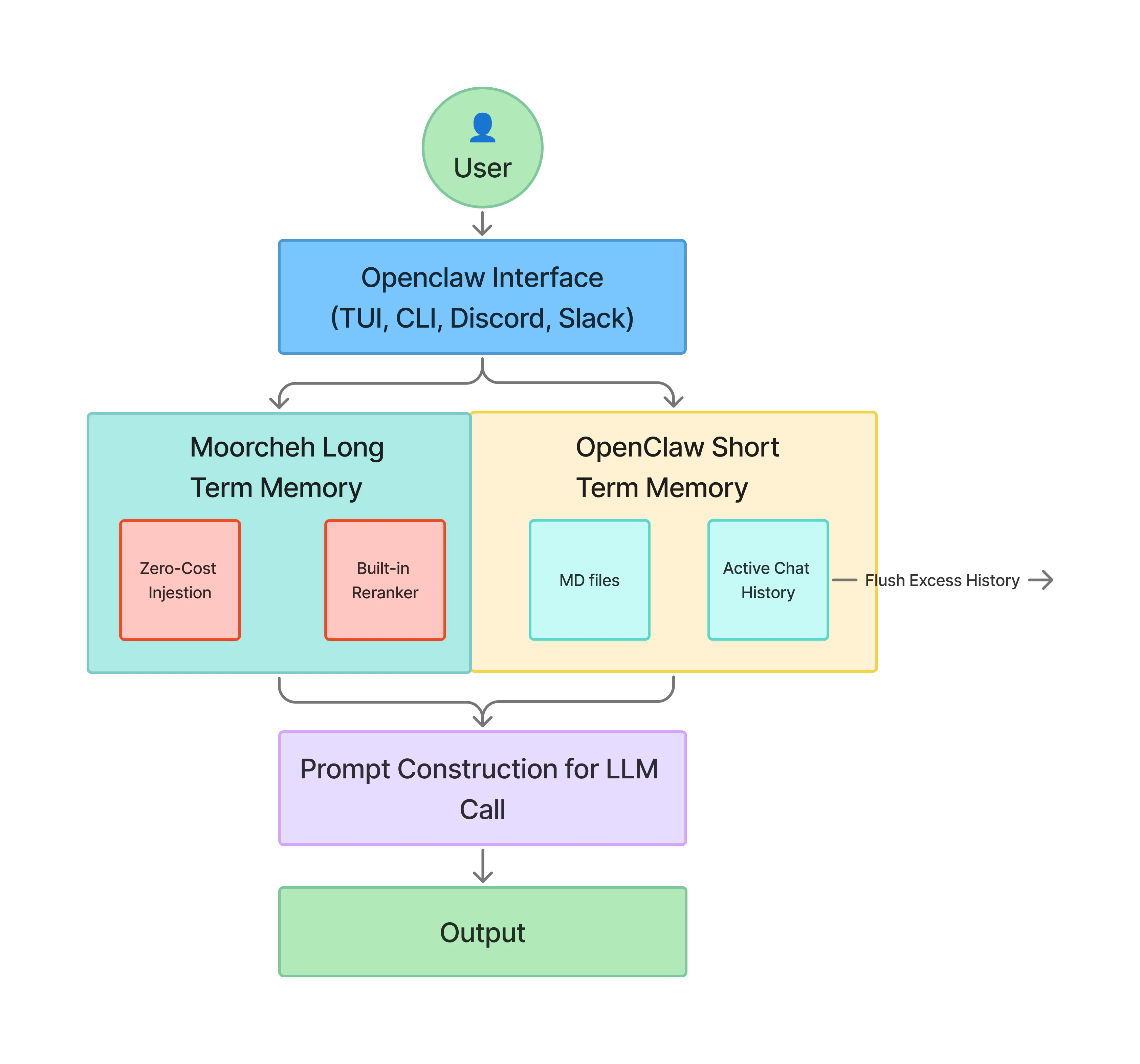

MemantoClaw is an open-source reference stack that simplifies running OpenClaw always-on assistants safely with built-in long-term memory. It combines three core technologies:

- Autonomy (OpenClaw): A powerful open-source agent framework.

- Security (NVIDIA OpenShell): A hardened sandbox that restricts network egress and file access.

- Memory (Memanto): A long-term memory architecture powered by Moorcheh that carries context across sessions.

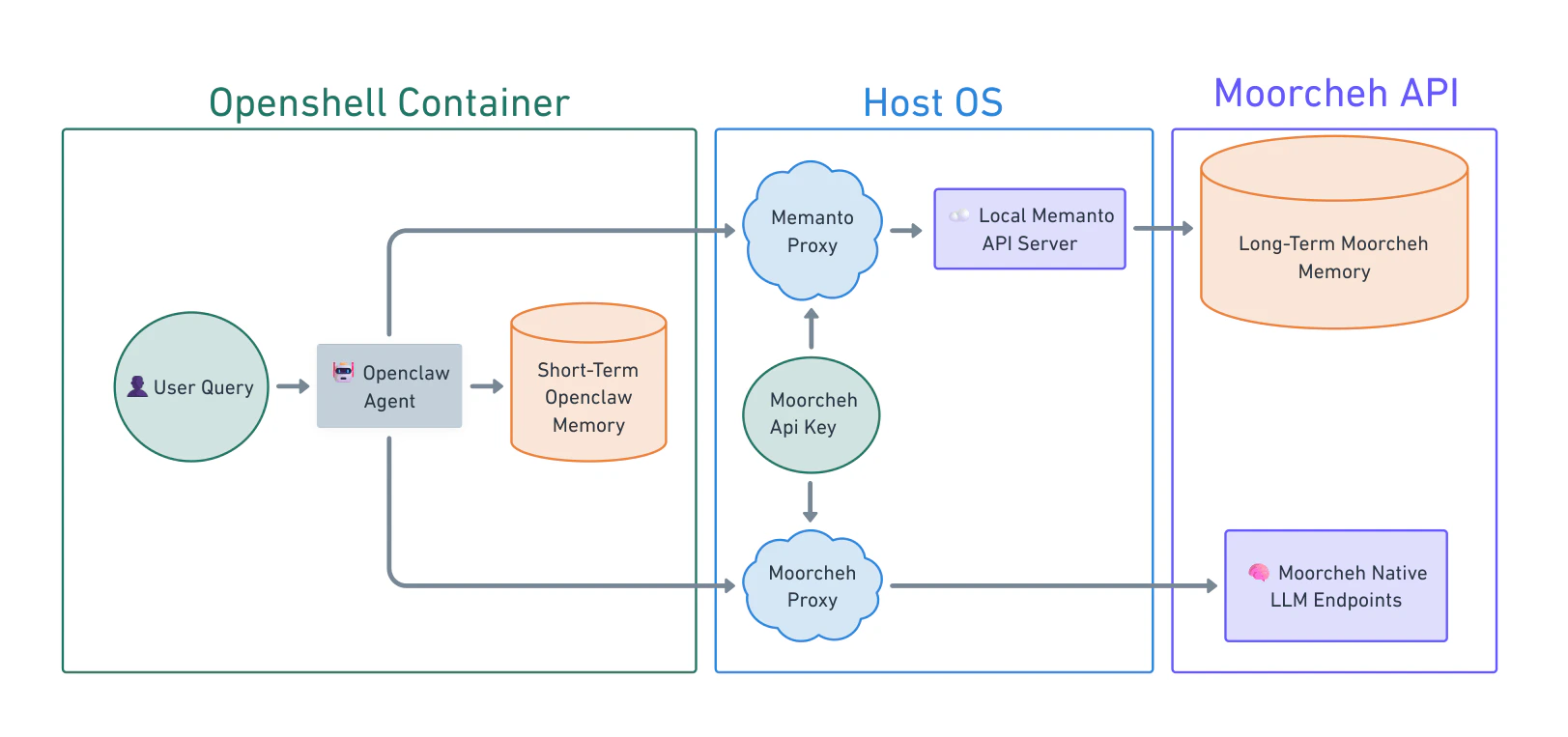

🏗️ Architecture

- The host manages credentials and provider routing to long-term memory services.

- The sandbox runs OpenClaw under OpenShell policy enforcement.

- The agent receives only the context it needs for each task, not raw host credentials or memory databases.
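
The separation described above can be sketched in a few lines of Python. This is an illustrative model only, not MemantoClaw's actual API: `HostBridge`, `TaskContext`, and `build_context` are hypothetical names, and an in-memory dictionary stands in for the remote memory service. The point it shows is that the credential lives on the host-side object, while the object handed to the sandbox carries only the task and a few retrieved snippets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskContext:
    """Everything the sandboxed agent sees for one task (hypothetical type)."""
    task: str
    snippets: tuple[str, ...]


class HostBridge:
    """Hypothetical host-side component; not part of any real MemantoClaw API."""

    def __init__(self, api_key: str, memory: dict[str, list[str]]):
        self._api_key = api_key  # stays on the host, never copied into a context
        self._memory = memory    # stand-in for the remote long-term memory store

    def build_context(self, task: str, topic: str, limit: int = 2) -> TaskContext:
        # Retrieve only a bounded number of relevant snippets for this task.
        snippets = self._memory.get(topic, [])[:limit]
        return TaskContext(task=task, snippets=tuple(snippets))


bridge = HostBridge(
    api_key="host-only-secret",
    memory={"travel": ["Prefers aisle seats", "Home airport is YYZ", "Avoids red-eye flights"]},
)
ctx = bridge.build_context("Book a flight", topic="travel")
```

In this sketch `ctx` is the only value that would cross into the sandbox. It has no field for the key and no handle to the memory store, so the agent cannot read either one even if it inspects everything it was given.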

The Ecosystem and How the Stack Fits Together

Three pieces usually appear together in a MemantoClaw deployment, each with a distinct scope:

| Project | Scope |
|---|---|
| OpenClaw | The assistant: runtime, tools, memory, and behavior inside the container. It does not define the sandbox or the host gateway. |
| OpenShell | The execution environment: sandbox lifecycle; network, filesystem, and process policy; inference routing; and the operator-facing `openshell` CLI for those primitives. |
| MemantoClaw | The reference stack that implements the definition above on the host: CLI and plugin, versioned blueprint, state migration helpers, and Moorcheh memory bridge. |

MemantoClaw Path versus OpenShell Path

Both paths assume OpenShell can sandbox a workload. The difference is who owns the integration work.

- MemantoClaw path: You adopt the reference stack. MemantoClaw’s blueprint encodes a hardened image, default policies, Moorcheh integration, and orchestration so `memantoclaw onboard` can provision a validated environment with minimal manual configuration.

- OpenShell path: You use OpenShell as the platform and supply your own container, install steps, policy YAML, provider setup, and any host bridges.

What MemantoClaw Adds Beyond the OpenShell Community Sandbox

| Capability | `openshell sandbox create --from openclaw` | `memantoclaw onboard` |
|---|---|---|
| Sandbox isolation | Yes. OpenShell applies seccomp filters, Landlock, and privilege dropping. | Yes. MemantoClaw applies these and layers a more restrictive policy on top. |
| Credential handling | You create providers manually. | Creates providers automatically and filters sensitive host environment variables. |
| Image hardening | Standard system tools included. | Strips build toolchains (gcc, make) and network probes (netcat). |
| Filesystem policy | Bundled policy for OpenClaw. | More restrictive read-only/read-write layout. Gateway config is immutable. |
| Inference setup | Manual configuration. | Wizard validates credentials and configures routing automatically. |
| Memory integration | Manual vector DB provisioning required. | Zero-config Memanto integration via Moorcheh. |
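
To make the "filters sensitive host env vars" row concrete, here is a minimal sketch of name-based environment filtering. It is an assumption about the general technique, not a description of how MemantoClaw implements it: the `SENSITIVE` pattern and the `filter_env` helper are hypothetical, and the real set of filtered variables is not specified in this guide.

```python
import re

# Hypothetical deny pattern; the variables MemantoClaw actually filters
# are not listed in this guide.
SENSITIVE = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)


def filter_env(env: dict[str, str]) -> dict[str, str]:
    """Return a copy of env without variables whose names look sensitive."""
    return {name: value for name, value in env.items() if not SENSITIVE.search(name)}


host_env = {
    "PATH": "/usr/bin",
    "LANG": "en_US.UTF-8",
    "AWS_SECRET_ACCESS_KEY": "example-value",
    "GITHUB_TOKEN": "example-value",
}
sandbox_env = filter_env(host_env)
```

Here `sandbox_env` keeps `PATH` and `LANG` and drops the two credential-like variables. A deny pattern like this is simple but can miss oddly named secrets, so a stricter design would pass through only an explicit allowlist of variable names.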

The Memanto Advantage

Unified API Key for Memory and Inference

MemantoClaw simplifies credential management by using a single API key for both long-term memory access and native LLM inference. Instead of juggling separate keys for your vector database (or Moorcheh memory service) and your LLM inference provider, you authenticate both with your Memanto API key. When you run `memantoclaw onboard`, you provide this one key, and MemantoClaw automatically configures the OpenShell inference gateway to proxy your LLM requests while also enabling the zero-config memory bridge.
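
One way to picture the single-key setup is as two provider entries that point at the same host-side secret. The sketch below is illustrative only: `ProviderConfig`, `providers_from_single_key`, and the secret name `MEMANTO_API_KEY` are hypothetical and do not reflect MemantoClaw's real configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderConfig:
    """Hypothetical provider entry; holds a reference to a secret, not the secret."""
    name: str
    credential_ref: str


def providers_from_single_key(key_name: str) -> list[ProviderConfig]:
    # Both the inference gateway and the memory bridge reuse one credential.
    return [
        ProviderConfig(name="inference", credential_ref=key_name),
        ProviderConfig(name="memory", credential_ref=key_name),
    ]


# "MEMANTO_API_KEY" is an assumed name for the host-side secret.
providers = providers_from_single_key("MEMANTO_API_KEY")
```

Storing a reference rather than the key value keeps the secret itself on the host, which matches the architecture described earlier, and rotating the key only requires updating one secret.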

Secure and Real-time Memory

By leveraging Moorcheh’s infrastructure, the Memanto memory layer offers zero-wait ingestion (no indexing delays) and a secure host-bridge architecture where memory stays safely on Moorcheh, and the sandbox only receives specific retrieved context.

Deep Dive: How It Works

For complete, unabridged technical details on this topic, refer to the official NVIDIA NemoClaw Documentation. Portions of this guide are summarized and adapted from NVIDIA Corporation (Copyright © 2026), licensed under the Apache License, Version 2.0.